RAG vs LLM Wiki: Why Your Organisation’s Knowledge Strategy Needs Both

For most organisations experimenting with AI, Retrieval-Augmented Generation (RAG) is the default approach for building knowledge systems. It’s fast to set up, works with existing document libraries, and lets large language models pull in real-time context from your data. But RAG has a fundamental limitation: it rediscovers knowledge from scratch on every query.

Enter the LLM Wiki pattern, introduced by Andrej Karpathy in April 2026. Instead of treating every question as a fresh search problem, an LLM Wiki compiles knowledge once, refines it over time, and builds a persistent, structured knowledge base that gets smarter the more you use it. Singapore’s Foreign Minister Dr. Vivian Balakrishnan demonstrated this in practice with his agentic AI “second brain”, using the LLM Wiki pattern to turn his diplomatic briefings, messages, and notes into a living knowledge graph.

For businesses and organisations handling complex, evolving knowledge—policy teams, R&D groups, consulting firms, procurement departments—understanding the difference between RAG and LLM Wiki isn’t academic. It’s strategic.

What is RAG?

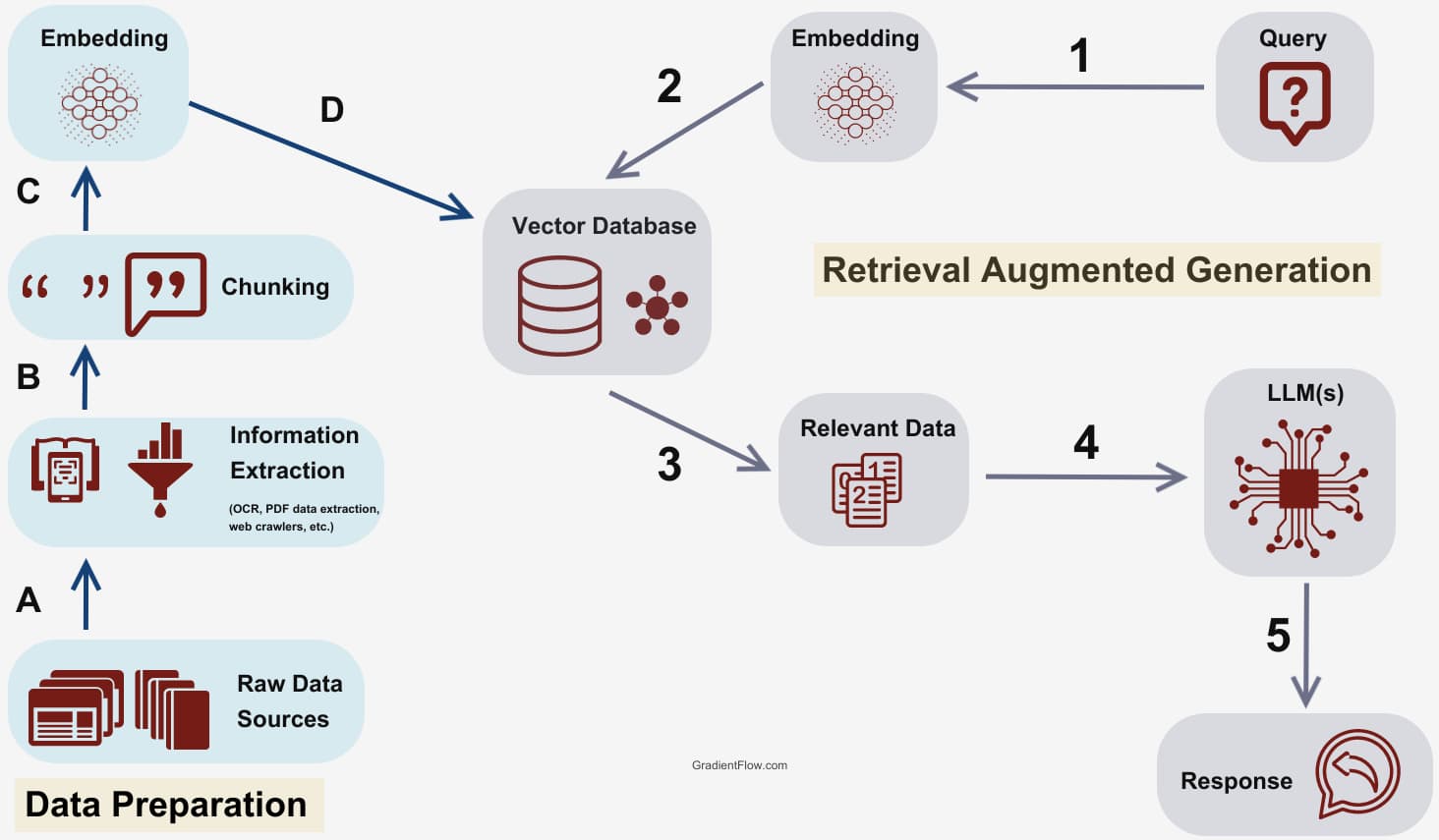

RAG is simple: LLM + Search. When a user asks a question, the system searches a knowledge source (documents, databases, internal wikis), retrieves the most relevant chunks, and feeds them to the language model as context. The model then generates an answer based on that retrieved information.

How RAG works:

- User submits a query

- System converts the query into a vector embedding

- Vector database returns the most semantically similar document chunks

- LLM receives those chunks as context and generates an answer

- Answer is delivered; retrieved context is discarded
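The five steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production pipeline: `embed` is a toy bag-of-words stand-in for a real embedding model, the in-memory list stands in for a vector database, and `echo_llm` is a hypothetical placeholder for whatever model client you actually use.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def answer(query: str, chunks: list[str], llm) -> str:
    """Retrieve context, build a prompt, and call the model once."""
    context = retrieve(query, chunks)
    prompt = "Context:\n" + "\n".join(context) + f"\n\nQuestion: {query}"
    # `context` is a local variable: nothing is written back to the corpus,
    # so the next query starts from the raw chunks again.
    return llm(prompt)


# Hypothetical sample data and a placeholder "model" that echoes its prompt.
CHUNKS = [
    "The refund policy allows returns within 30 days.",
    "Shipping takes five business days.",
    "Our office is closed on public holidays.",
]


def echo_llm(prompt: str) -> str:
    return prompt


print(answer("What is the refund policy?", CHUNKS, echo_llm))
```

Note that `answer` keeps no state between calls, which is the behaviour the last step describes: the retrieved context is used once and then dropped.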

RAG excels at:

- Real-time data access – pulling in current product specs, pricing, or regulatory updates

- Handling large, unstructured corpora – searching across thousands of PDFs, emails, or internal wikis

- Quick deployment – you can have a working RAG system in days

What is an LLM Wiki?

An LLM Wiki flips the script. Instead of retrieving raw documents at query time, the system compiles knowledge upfront into a structured, interlinked collection of markdown files. When you add a new source, the LLM reads it, extracts key information, and integrates it into the existing wiki—updating entity pages, revising summaries, and noting contradictions.

How an LLM Wiki works:

- Raw sources (articles, briefings, research papers) are ingested

- LLM extracts structured facts and entities

- Facts are written into persistent, interlinked markdown “pages” that together form a knowledge graph

- When a user asks a question, the system queries the wiki (not raw documents)

- New answers refine the wiki, which improves future queries

The key difference: knowledge accumulates. Every query, every answer, every new source strengthens the knowledge base. Over months, the wiki develops institutional memory about your domain.
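To make the ingest-then-query loop concrete, here is a rough sketch, assuming one markdown file per entity. The `ingest`, `query`, and `page_path` names are hypothetical, and the `extract` callable stands in for the LLM extraction step. A real LLM Wiki would also revise summaries and flag contradictions; this sketch only appends new facts and skips exact duplicates.

```python
from pathlib import Path
from typing import Callable, Iterable

Fact = tuple[str, str]  # (entity name, one-sentence fact)


def page_path(wiki_dir: Path, entity: str) -> Path:
    """Map an entity name to its markdown page, e.g. 'Acme Corp' -> acme-corp.md."""
    slug = "-".join(entity.lower().split())
    return wiki_dir / f"{slug}.md"


def ingest(
    wiki_dir: Path,
    source_id: str,
    text: str,
    extract: Callable[[str], Iterable[Fact]],
) -> list[Path]:
    """Write facts extracted from one source into persistent entity pages."""
    wiki_dir.mkdir(parents=True, exist_ok=True)
    touched: list[Path] = []
    for entity, fact in extract(text):  # assumption: an LLM call in practice
        path = page_path(wiki_dir, entity)
        if not path.exists():
            path.write_text(f"# {entity}\n\n", encoding="utf-8")
        line = f"- {fact} (source: {source_id})\n"
        existing = path.read_text(encoding="utf-8")
        if line not in existing:  # skip facts already recorded from this source
            path.write_text(existing + line, encoding="utf-8")
        if path not in touched:
            touched.append(path)
    return touched


def query(wiki_dir: Path, entity: str) -> str:
    """Answer from the compiled page, not from the raw sources."""
    path = page_path(wiki_dir, entity)
    return path.read_text(encoding="utf-8") if path.exists() else ""
```

Because the pages are plain files, they can sit in a version-controlled repository, so each ingest shows up as a reviewable diff.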

RAG vs LLM Wiki: The Core Difference

| Dimension | RAG | LLM Wiki |
|---|---|---|
| Approach | Retrieve chunks at query time | Compile knowledge upfront, query structured wiki |
| Knowledge lifespan | Query-scoped (volatile) | Persistent (accumulates over time) |
| Answer quality over time | Static (same retrieval every time) | Improves (wiki refines with each use) |
| Transparency | Limited (vector similarity is opaque) | Full (knowledge is human-readable markdown) |
| Post-query learning | None (context discarded) | Yes (answers update the wiki) |
| Best for | Real-time data, large unstructured corpora | Complex domains, long-term institutional memory |

Use Cases: When to Use RAG vs LLM Wiki

Use RAG when you need:

- Real-time retrieval – customer support systems pulling from live product catalogues or ticketing databases

- High-volume, low-stakes queries – FAQ bots, documentation search, internal helpdesks

- Rapid deployment – you need a working system in days, not weeks

- Broad, shallow coverage – searching across thousands of documents where most queries are one-off

Use an LLM Wiki when you need:

- Deep domain expertise – policy analysis, legal research, R&D knowledge bases where context and relationships matter

- Institutional memory – consulting firms tracking client histories, procurement teams managing vendor relationships, research labs compiling findings over years

- High-stakes accuracy – diplomacy, compliance, strategic planning where wrong answers carry real cost

- Auditable knowledge – systems where you need to trace every claim back to a structured, version-controlled source

Use both when your queries split into a predictable core and a long tail:

A common hybrid pattern is emerging: the LLM Wiki handles roughly 80% of queries (the predictable, high-stakes ones), and RAG handles the long tail (the rare, broad, or real-time queries). This gives you compounding knowledge for core domains while maintaining flexibility for edge cases.
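One way to picture the hybrid pattern is a small router that tries the wiki first and falls back to RAG only when no compiled page matches. The sketch below is an assumption about how such routing could look, not a description of any particular product: `hybrid_answer`, `lookup`, `fake_rag`, and the keyword-matching rule are all hypothetical.

```python
from typing import Callable, Optional


def hybrid_answer(
    question: str,
    wiki_lookup: Callable[[str], Optional[str]],
    rag_answer: Callable[[str], str],
) -> tuple[str, str]:
    """Return (route, answer), preferring the wiki and falling back to RAG."""
    page = wiki_lookup(question)
    if page:
        return "wiki", page
    return "rag", rag_answer(question)


# Hypothetical compiled wiki: topic keyword -> page text.
PAGES = {"vendor": "# Vendor overview\n- Acme Corp supplies widgets."}


def lookup(question: str) -> Optional[str]:
    """Naive topic match; a real system might use an index or the LLM itself."""
    q = question.lower()
    return next((page for topic, page in PAGES.items() if topic in q), None)


def fake_rag(question: str) -> str:
    """Placeholder for a full retrieve-and-generate call."""
    return f"[RAG answer for: {question}]"


print(hybrid_answer("Which vendor supplies widgets?", lookup, fake_rag)[0])
print(hybrid_answer("What is today's exchange rate?", lookup, fake_rag)[0])
```

Returning the route alongside the answer makes it easy to log how often each path is taken, which in turn shows which long-tail topics might deserve a wiki page of their own.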

What This Means for Your Organisation

1. RAG is a starting point, not the endpoint

RAG gets you to a working AI assistant quickly, but it doesn’t build expertise over time. If your team is repeatedly asking similar questions, RAG is redoing the same work every time. An LLM Wiki, by contrast, turns repeated queries into refined knowledge.

2. Knowledge graphs beat similarity search for complex domains

RAG relies on semantic similarity, which breaks down when relationships between entities matter. If you’re in procurement, policy, or R&D, you need a system that understands how vendors, regulations, and stakeholders connect—not just which documents mention them.

3. Transparency is a feature, not a bug

With RAG, retrieval is a black box: you get a similarity score, but no explanation of why a chunk was selected. With an LLM Wiki, every fact lives in a human-readable markdown file that you can audit, version-control, and refine. For compliance-heavy industries, this is non-negotiable.

4. Compounding knowledge is a competitive advantage

A six-month-old LLM Wiki about your industry, your vendors, or your clients is qualitatively different from a RAG system that re-reads the same documents on every query. The wiki encodes relationships, contradictions, and evolving narratives. Over time, this becomes institutional intelligence that you can’t rebuild from scratch.

Building Your Knowledge Strategy

For most organisations, the right answer isn’t RAG or LLM Wiki—it’s RAG and LLM Wiki, deployed strategically:

- Start with RAG for broad, real-time retrieval (support, documentation, FAQs)

- Build an LLM Wiki for your core strategic domains (vendor intelligence, policy analysis, client histories, research synthesis)

- Integrate both so the wiki handles predictable, high-value queries and RAG fills the gaps

If you’re serious about building a knowledge system that doesn’t just retrieve documents but actively strengthens your organisation’s expertise over time, the LLM Wiki pattern is the blueprint.

Ready to Build a Knowledge System That Actually Learns?

Whether you’re managing complex procurement relationships, building institutional memory for policy work, or creating a research knowledge base that compounds over time, the choice between RAG and LLM Wiki isn’t just technical—it’s strategic.

If you’d like help designing a knowledge architecture that fits your organisation’s needs—whether that’s a hybrid RAG + LLM Wiki system, a self-hosted second brain for leadership teams, or a domain-specific knowledge graph—we can help you build it.

Contact us at [email protected] to start the conversation.